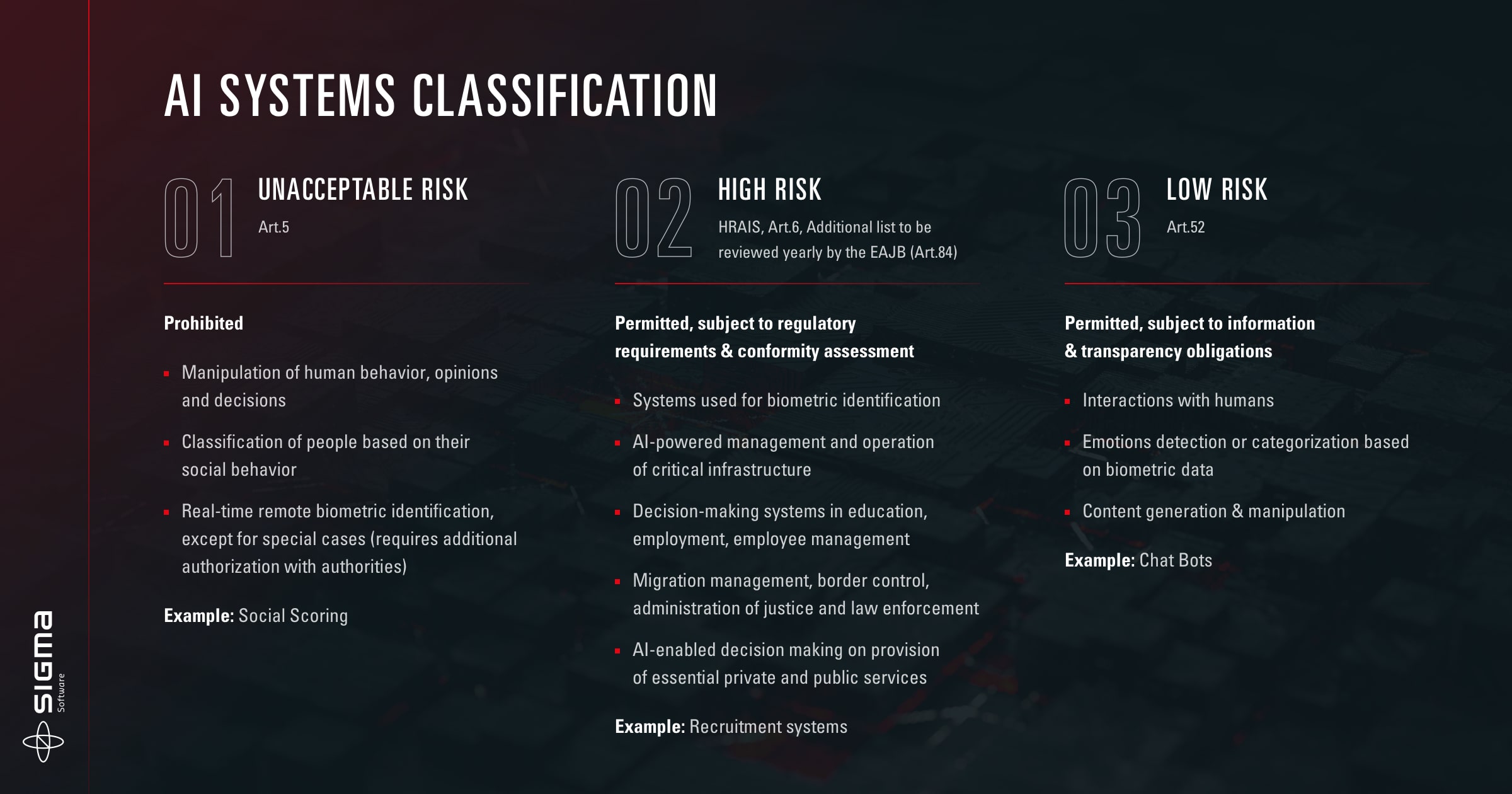

Who will be affected by AI legislation?

What countries are subject to AI regulations?

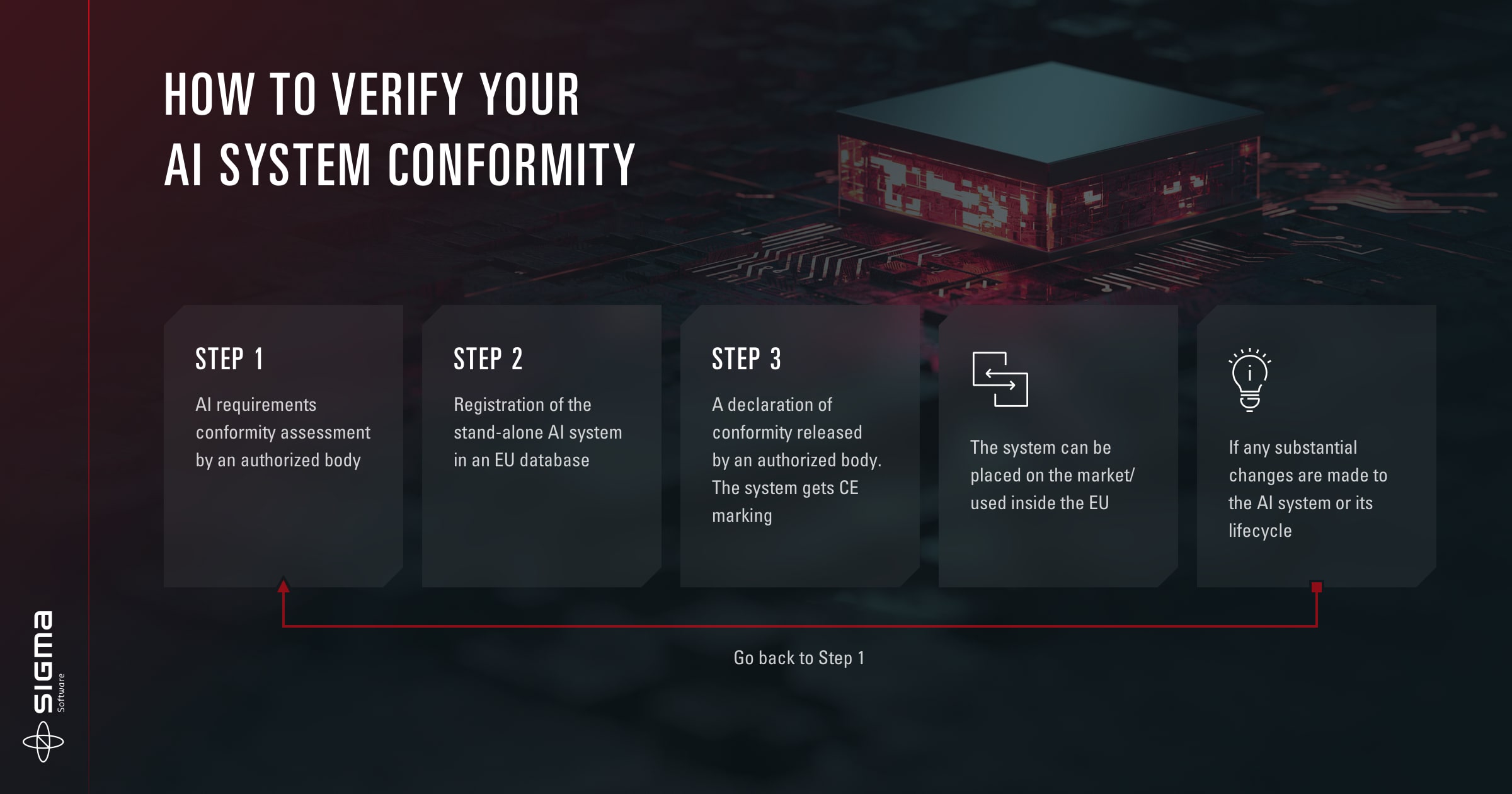

How long will it take to enforce the AI Act procedures?

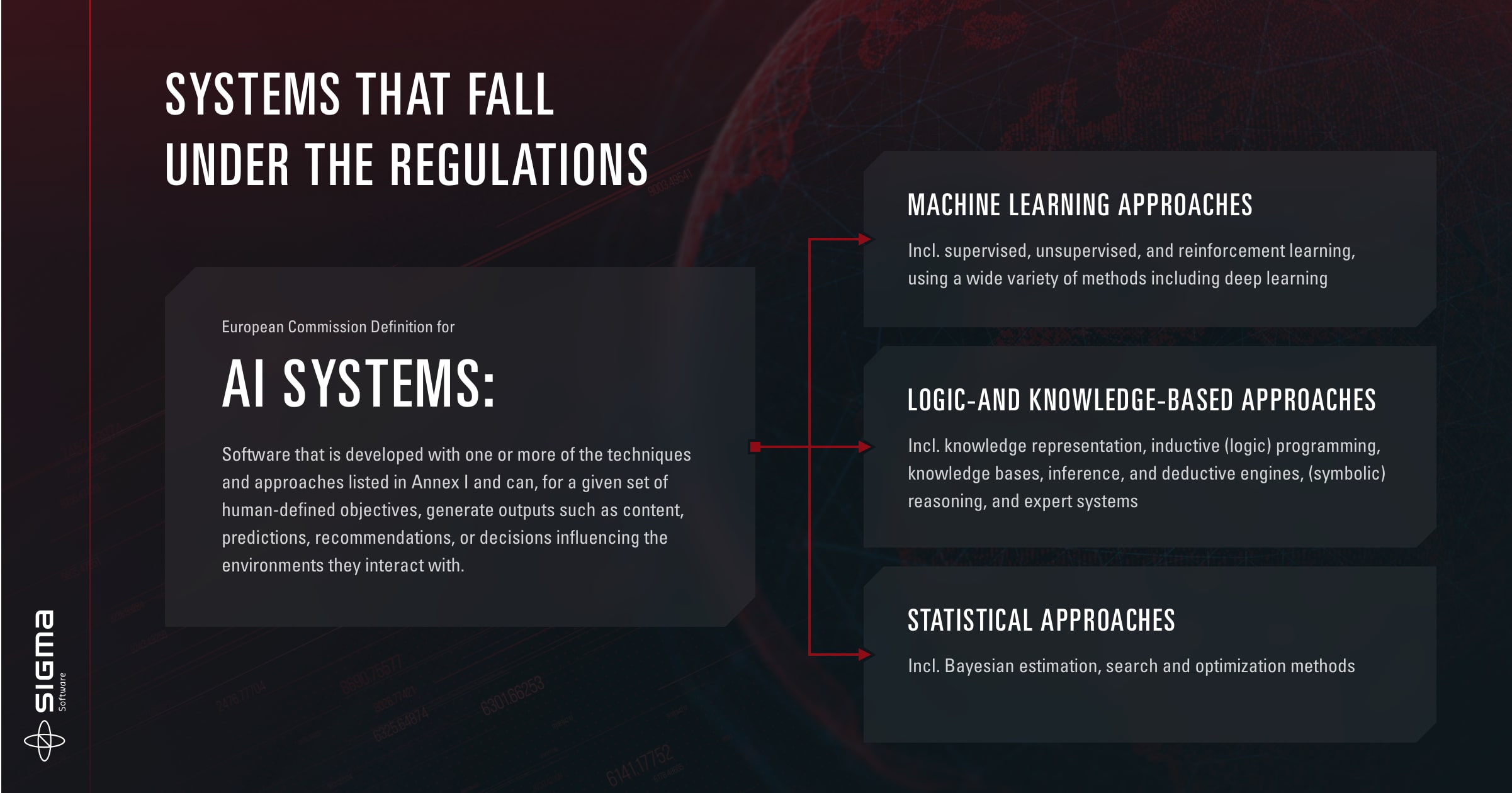

AI Act compliance requirements

What are high-risk AI systems, and how are they affected?

Penalties for non-compliance with the EU AI Act