Augmented Reality (AR) is becoming increasingly popular in the mobile world, and new AR applications are published every day. The reason is fairly obvious: a person receives most information (about 90%, by a commonly cited estimate) through vision. By augmenting the real world with digital data, we enhance the user’s visual perception and let users interact with information layered over the real world. This approach transforms the way people see and use mobile applications. The main application areas include advertising, entertainment, gaming, education, interior design, and more.

There are different types of AR, each better suited to different use cases, and several SDKs provide various features for developers: Wikitude, Vuforia, OpenCV, ARCore, etc. This article is about recognition-based AR and the experience we gained while working with the Vuforia SDK.

Vuforia is a software platform for creating AR applications. It offers a wide range of features, and its recognition capabilities include objects, images, cylinders, text, boxes, and more. It was originally launched in 2011 and is still under active development. Because Vuforia is neither open source nor free, you need a license key to use it. However, you can try it with the Developer License Key and choose a pricing plan for your project later.

Object recognition lets you detect and track 3D objects in an image or video sequence. In brief, it works as follows: the recognition SDK analyzes an image to extract feature points and matches them against reference images of the real object prepared beforehand. If enough feature points match, recognition succeeds. Finally, the SDK gives you the object’s 3D position and orientation, so that you can render the augmentation.
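To make the matching idea concrete, here is a toy sketch in Java. Vuforia is closed source, so this is not its actual algorithm: it assumes hypothetical 64-bit binary feature descriptors compared by Hamming distance, and both thresholds are made-up values. A feature from the camera image counts as matched when its nearest reference descriptor is close enough, and the object counts as recognized when enough features match.

```java
/**
 * Toy illustration of recognition by feature matching. NOT Vuforia's internal
 * algorithm: descriptors are modelled as hypothetical 64-bit binary values
 * compared by Hamming distance, and both thresholds are assumptions.
 */
class FeatureMatchSketch {

    // Hypothetical tuning values, chosen only for illustration.
    static final int MAX_HAMMING_DISTANCE = 10; // max differing bits for a "match"
    static final int MIN_MATCHES = 3;           // matches needed to declare recognition

    /** Number of differing bits between two binary descriptors. */
    static int hamming(long a, long b) {
        return Long.bitCount(a ^ b);
    }

    /** Counts query descriptors whose nearest reference descriptor is close enough. */
    static int countMatches(long[] query, long[] reference) {
        int matches = 0;
        for (long q : query) {
            int best = Integer.MAX_VALUE;
            for (long r : reference) {
                best = Math.min(best, hamming(q, r));
            }
            if (best <= MAX_HAMMING_DISTANCE) {
                matches++;
            }
        }
        return matches;
    }

    /** Recognition succeeds when enough feature points match the reference data. */
    static boolean isRecognized(long[] query, long[] reference) {
        return countMatches(query, reference) >= MIN_MATCHES;
    }

    public static void main(String[] args) {
        // Hypothetical reference descriptors, as if extracted from the scanned object.
        long[] reference = {0x0F0F0F0F0F0F0F0FL, 0x00000000FFFFFFFFL, 0x123456789ABCDEF0L};
        // Camera-frame descriptors: slightly perturbed copies of the reference ones.
        long[] query = {0x0F0F0F0F0F0F0F0EL, 0x00000000FFFFFFF0L, 0x123456789ABCDEF1L};
        System.out.println("matches: " + countMatches(query, reference)
                + ", recognized: " + isRecognized(query, reference));
    }
}
```

Production pipelines typically also check that the matched points are geometrically consistent before estimating the object’s pose; that step is omitted here for brevity.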

After registering on the Vuforia Developer Portal, you get access to the resources the platform provides: the Vuforia SDK, samples, the Vuforia Object Scanner, etc.

Object Targets are a digital representation of the features and geometry of a physical object. You create an Object Target by scanning a physical object with the Vuforia Object Scanner app. With this app, you scan the object from all sides to capture the vantage points that matter for your app’s user experience; you can test your scan results along the way or continue scanning. Finally, the scanner produces an Object Data (*.OD) file containing the source data required to define an Object Target in the Target Manager on the Vuforia Developer Portal. Here are the screenshots of our scanning process:

![]()

![]()

Vuforia places several requirements on a physical object used as a target: it should be opaque and rigid, contain contrast-based features, and have no movable parts. Importantly, Vuforia Object Recognition is optimized for objects that fit on a tabletop and are found indoors.

After scanning, you upload your Object Data file to the Vuforia Target Manager on the Vuforia Developer Portal, where an Object Target is generated and can be packaged into a Device Database. Voilà! You can now download the database and use it in your application.

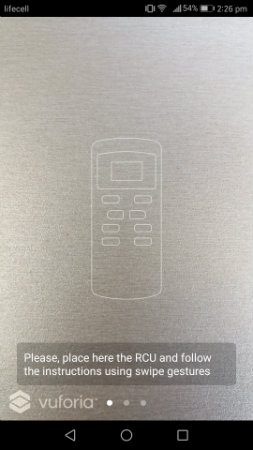

To see how it works in practice, we decided to create an Android application: an interactive tutorial that helps people learn how to use the remote control unit (RCU) of an air conditioner. The main use case is guiding the user to turn on Cool mode. It’s a simple idea that came to us on a hot summer day, and it illustrates a possible use of AR well.

We developed the application using Android Studio, the Vuforia SDK, and OpenGL ES 2.0.

The app guides users through the steps they need to take to reach the main goal: setting the correct mode. The Object Target here is the RCU. When you point your device’s camera at it, you see augmented instructions for the current step.
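One way to model such a step-by-step flow is a small state machine. The Java sketch below is hypothetical: the class, the step texts, and the millimeter coordinates are invented for illustration and are not taken from our app. Each step pairs an instruction with the position of the RCU element to highlight, and a renderer would draw the overlay for `currentStep()` whenever the RCU is recognized.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;

/**
 * Hypothetical model of the tutorial flow: an ordered list of steps, each pairing
 * an instruction with the RCU element to highlight. Texts and coordinates are made up.
 */
class TutorialSketch {

    /** One tutorial step; xMm/yMm locate the element relative to the RCU's origin. */
    static final class Step {
        final String instruction;
        final float xMm;
        final float yMm;

        Step(String instruction, float xMm, float yMm) {
            this.instruction = instruction;
            this.xMm = xMm;
            this.yMm = yMm;
        }
    }

    private final List<Step> steps;
    private int current = 0;

    TutorialSketch(List<Step> steps) {
        if (steps.isEmpty()) {
            throw new IllegalArgumentException("tutorial needs at least one step");
        }
        this.steps = Collections.unmodifiableList(new ArrayList<>(steps));
    }

    /** The step whose overlay should be rendered over the recognized RCU. */
    Step currentStep() {
        return steps.get(current);
    }

    /** True once the user has reached the last step (the goal, e.g. Cool mode set). */
    boolean isFinished() {
        return current == steps.size() - 1;
    }

    /** Advances to the next step; stays on the last step once the goal is reached. */
    void next() {
        if (!isFinished()) {
            current++;
        }
    }

    public static void main(String[] args) {
        TutorialSketch tutorial = new TutorialSketch(Arrays.asList(
                new Step("Press the power button", 20f, -35f),          // hypothetical
                new Step("Press MODE until Cool is shown", 45f, -60f))); // hypothetical
        System.out.println(tutorial.currentStep().instruction);
        tutorial.next();
        System.out.println(tutorial.currentStep().instruction
                + ", finished: " + tutorial.isFinished());
    }
}
```

Keeping the element positions in the step data means the rendering code stays the same for every step; only the millimeter offset it receives changes.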

From a technical point of view, it works as follows: when the SDK recognizes the RCU, it provides us with the projection and modelview matrices, which we use to build the augmentation layer over the RCU. The origin of the RCU’s coordinate system is in its upper-left corner, defined by the position of our RCU during scanning, and coordinates are measured in millimeters. This means we measure the location of each element of the physical object in millimeters and then apply the appropriate OpenGL transformations to the augmentation layer for the current step’s screen.
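The transformation step can be sketched in plain Java. Assumptions: matrices are column-major `float[16]` arrays, the layout OpenGL ES uses; identity matrices stand in for the projection and modelview matrices the SDK would provide; and the button offset (including the sign of the y axis, which depends on how the object was scanned) is hypothetical. Translating the modelview by an element’s offset in millimeters moves the local origin onto that element, and multiplying by the projection gives the MVP matrix for the shader. On Android, `android.opengl.Matrix.translateM` and `Matrix.multiplyMM` perform the same operations; they are written out here so the example runs on a plain JVM.

```java
import java.util.Arrays;

/**
 * Sketch of positioning an overlay on the RCU using column-major 4x4 matrices.
 * The SDK matrices are replaced by identity stand-ins; the button offset is hypothetical.
 */
class OverlayTransform {

    /** Returns m * T(x, y, z), like android.opengl.Matrix.translateM. */
    static float[] translate(float[] m, float x, float y, float z) {
        float[] r = Arrays.copyOf(m, 16);
        for (int i = 0; i < 4; i++) {
            r[12 + i] = m[i] * x + m[4 + i] * y + m[8 + i] * z + m[12 + i];
        }
        return r;
    }

    /** Column-major product a * b, like android.opengl.Matrix.multiplyMM. */
    static float[] multiply(float[] a, float[] b) {
        float[] r = new float[16];
        for (int col = 0; col < 4; col++) {
            for (int row = 0; row < 4; row++) {
                float sum = 0f;
                for (int k = 0; k < 4; k++) {
                    sum += a[k * 4 + row] * b[col * 4 + k];
                }
                r[col * 4 + row] = sum;
            }
        }
        return r;
    }

    /** Applies a column-major matrix to the point (x, y, z, 1). */
    static float[] transformPoint(float[] m, float x, float y, float z) {
        float[] out = new float[4];
        for (int row = 0; row < 4; row++) {
            out[row] = m[row] * x + m[4 + row] * y + m[8 + row] * z + m[12 + row];
        }
        return out;
    }

    static float[] identity() {
        float[] m = new float[16];
        m[0] = m[5] = m[10] = m[15] = 1f;
        return m;
    }

    public static void main(String[] args) {
        // Stand-ins for the SDK-provided matrices (identity, for illustration only).
        float[] projection = identity();
        float[] modelView = identity();

        // Hypothetical button centre, in mm from the RCU's upper-left corner.
        float buttonX = 20f;
        float buttonY = -35f;

        float[] buttonModelView = translate(modelView, buttonX, buttonY, 0f);
        float[] mvp = multiply(projection, buttonModelView);
        // On a device, mvp would be uploaded with GLES20.glUniformMatrix4fv.
        System.out.println(Arrays.toString(transformPoint(mvp, 0f, 0f, 0f)));
    }
}
```

Because the translation is applied on the object side of the modelview, the same millimeter offsets stay valid however the user holds the phone; only the SDK-provided matrices change from frame to frame.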

All augmentation in our application is rendered with plain OpenGL. For more complex 3D scenes, however, it is better to use a 3D library. Here are some application screenshots:
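With plain OpenGL ES 2.0, each overlay element comes down to vertex data plus a shader. The sketch below builds a flat highlight rectangle in millimeters (the RCU’s units) and copies it into a direct, native-order `FloatBuffer`, the form Android OpenGL ES code typically passes to `GLES20.glVertexAttribPointer`. The rectangle size and the shader variable names `uMVPMatrix` and `aPosition` are hypothetical.

```java
import java.nio.ByteBuffer;
import java.nio.ByteOrder;
import java.nio.FloatBuffer;

/** Sketch of vertex data for a flat highlight rectangle; sizes are hypothetical. */
class HighlightQuad {

    // Minimal GLSL ES 1.00 vertex shader; uMVPMatrix and aPosition are hypothetical names.
    static final String VERTEX_SHADER =
            "uniform mat4 uMVPMatrix;\n"
            + "attribute vec4 aPosition;\n"
            + "void main() {\n"
            + "  gl_Position = uMVPMatrix * aPosition;\n"
            + "}\n";

    static final int COORDS_PER_VERTEX = 3;

    /** Two triangles covering a width x height rectangle (mm) centred on the local origin, z = 0. */
    static float[] quadVertices(float widthMm, float heightMm) {
        float hw = widthMm / 2f;
        float hh = heightMm / 2f;
        return new float[] {
            -hw, -hh, 0f,   hw, -hh, 0f,   hw, hh, 0f,   // first triangle
            -hw, -hh, 0f,   hw, hh, 0f,   -hw, hh, 0f,   // second triangle
        };
    }

    /** Copies vertices into a direct buffer in the platform's native byte order. */
    static FloatBuffer toBuffer(float[] vertices) {
        FloatBuffer buffer = ByteBuffer.allocateDirect(vertices.length * Float.BYTES)
                .order(ByteOrder.nativeOrder())
                .asFloatBuffer();
        buffer.put(vertices);
        buffer.position(0); // rewind so OpenGL reads from the first vertex
        return buffer;
    }

    public static void main(String[] args) {
        float[] vertices = quadVertices(12f, 8f); // hypothetical 12 x 8 mm highlight
        FloatBuffer buffer = toBuffer(vertices);
        System.out.println("vertices: " + vertices.length / COORDS_PER_VERTEX
                + ", floats in buffer: " + buffer.remaining());
    }
}
```

On a device, the buffer would be bound with `GLES20.glVertexAttribPointer(positionHandle, 3, GLES20.GL_FLOAT, false, 0, buffer)` and drawn with `GLES20.glDrawArrays(GLES20.GL_TRIANGLES, 0, 6)`, where `positionHandle` is a hypothetical attribute location and the current step’s MVP matrix is uploaded to `uMVPMatrix`.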

A picture is worth a thousand words, and tutorials like this help users learn things more easily. From a developer’s point of view, it was very interesting to dive into AR application development and see how it works under the hood. It was also a real pleasure to hear the excited feedback from the app’s users.

See how it works:

Tetiana is a Software Developer at Sigma Software. She has been working as an Android developer for more than 4 years and is passionate about new technologies in the mobile world. Recently, Tetiana had a chance to dive into AR/VR application development on Android and achieved impressive results in this area.