USA

Thank you for reaching out to Sigma Software!

Please fill the form below. Our team will contact you shortly.

Sigma Software has offices in multiple locations in Europe, Northern America, Asia, and Latin America.

USA

Sweden

Germany

Canada

Israel

Singapore

UAE

Australia

Austria

Ukraine

Poland

Argentina

Brazil

Bulgaria

Colombia

Czech Republic

Mexico

Portugal

Romania

Uzbekistan

This is the continuation of the topic from The Pillars of Functional Programming (Part 1), proceeding with the consequences of treating the functions as a first-class type.

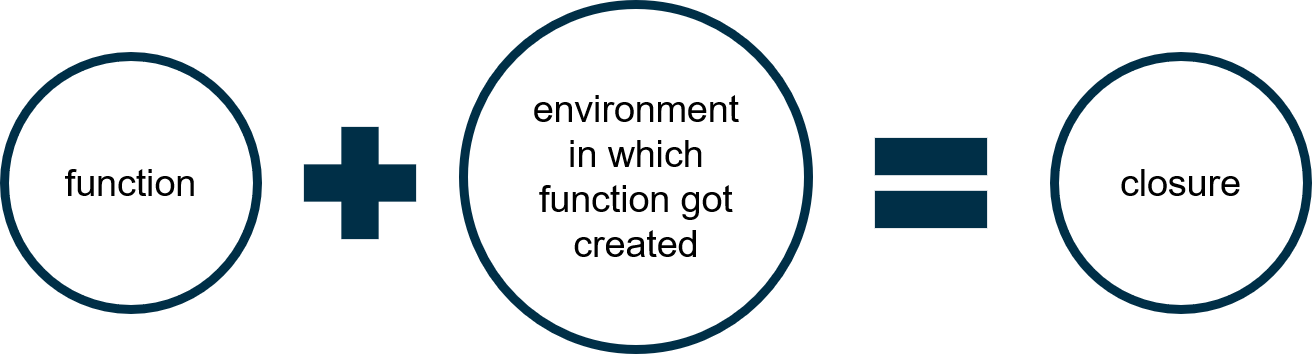

The concept of Closures may seem hard to grasp, but in fact, they aren’t a rocket science. From a programmer’s perspective, you should consider closure as a function created at runtime that has references to free variables. Alternatively, you may favor a definition on Image 5: function + environment in which it was created = closure.

Image 5. Closure

Interestingly, the first appearance of closures was not intentional: they were accidentally invented during bugfix in the LISP compiler by Steve Russel and received the name “FUNARG device” at the time.

The importance and appliance of closures is similar to objects in object-oriented languages, since they bind together data and behavior. Furthermore closures provide information hiding very similar to access specifier “private” in languages like C++, Java or C#, since every variable defined inside the closure function remains invisible outside of its scope.

It is possible to consider the function creating a closure as a class definition, while closure itself as an instance of such class.

Compare the following examples of utilizing closures in Clojure and F# with the class definition in C#:

; Clojure

(defn new-color [r g b]

(let [

mix (fn [c1 c2] ; private function

(-> (+ c1 c2) (#(/ % 2)) float Math/round))

; variable 'self' serves as substitute for keyword 'this' in C# or Java

self {

; public properties

:R r

:G g

:B b

; mix-with is a closure itself

; since it references free variables r g b

; here it serves as a public function

:mix-with (fn [other-color]

(new-color

(mix r (other-color :R))

(mix g (other-color :G))

(mix b (other-color :B))))}]

; return newly create object

self))

(def red (new-color 255 0 0))

(def white (new-color 255 255 255))

(def coral-red

((((red :mix-with) white)

:mix-with) red))// F#

open System

type Color = {

R: byte

G: byte

B: byte

MixWith: (Color -> Color)

}

let rec NewColor r g b =

let mix a b = // private function

((float)a + (float)b) / 2.0

|> Math.Round

|> byte

{ // public properties

R = r;

G = g;

B = b;

// MixWith is a closure itself

// since it references free variables r g b

// here it serves as a public function

MixWith = fun otherColor ->

NewColor

(mix r otherColor.R)

(mix g otherColor.G)

(mix b otherColor.B)

}

let red = NewColor 255uy 0uy 0uy

let white = NewColor 255uy 255uy 255uy

let coralRed = (red.MixWith white).MixWith red// C#

public class Color {

private byte Mix(byte c1, byte c2) =>

(byte)Math.Round(

((float)c1 + (float)c2) / 2);

public Color(byte r, byte g, byte b) {

this.R = r;

this.G = g;

this.B = b;

}

public byte R { get; }

public byte G { get; }

public byte B { get; }

public Color MixWith(Color otherColor) =>

new Color(

this.Mix(this.R, otherColor.R),

this.Mix(this.G, otherColor.G),

this.Mix(this.B, otherColor.B));

}

var red = new Color(255, 0, 0);

var white = new Color(255, 255, 255);

var coralRed = red.MixWith(white).MixWith(red);They are identical in functionality, while in Clojure and F# we achieved it using just first-class functions.

I must confess that emulating object systems with closures even being possible and quite laconic, is not an everyday practice. There is another much common application of closures called “partial application”, which simply means the process of breaking a single function into multiple ones of smaller arity.

The motivation of doing such transformation is simple. Quite often, an application is not supplied with all the arguments required to perform some operation immediately. Therefore, instead of passing them around until other arguments appear, it is possible to pack existing arguments into closure objects, or, in other words, partially apply a function to them.

Let’s rethink the previous example extracting the colors mixing function from the color type (which would be a more idiomatic approach):

; Clojure

(defrecord color [R G B])

(defn mix-colors [c1 c2]

(let [mix (fn [c1 c2]

(-> (+ c1 c2) (#(/ % 2)) float Math/round))]

(color.

(mix (:R c1) (:R c2))

(mix (:G c1) (:G c2))

(mix (:B c1) (:B c2)))))

; That is our simple palette:

(def red (color. 255 0 0))

(def green (color. 0 255 0))

(def blue (color. 0 0 255))

(def white (color. 255 255 255))

; Imagine brush color was set to red

(def brush-color red)

(comment

Now we will remember it in closure and use for mixing with other colors from palette

In other words, we will partially apply mix-colors to the color we know at the moment)

(def mix-with-palette (fn [color]

(comment

This function is closure

it references free variable brush-color)

(mix-colors brush-color color)))

(comment

Then apply mix-with-palette to other colors as soon as they available)

(def olive (mix-with-palette green))

(def purple (mix-with-palette blue))// F#

type Color = {

R: byte

G: byte

B: byte

}

let mixColors c1 c2 =

let mix a b =

((float)a + (float)b) / 2.0

|> System.Math.Round

|> byte

{ R = mix c1.R c2.R;

G = mix c1.G c2.G;

B = mix c1.B c2.B; }

// That's our simple palette:

let red = { R=255uy; G=0uy; B=0uy; }

let green = { R=0uy; G=255uy; B=0uy; }

let blue = { R=0uy; G=0uy; B=255uy; }

// Imagine brush color was set to red

let brushColor = red

(* Now we'll remember it in closure and use for mixing with other colors from palette

In other words we'll partially apply mixColors to the color we know at the moment *)

let mixWithPalette =

(* This function is closure

it references free variable brushColor *)

fun color -> mixColors brushColor color

(* Then apply mixWithPalette to other colors as soon as they awailable *)

let olive = mixWithPalette green

let purple = mixWithPalette blueThe term “partial application” is often conflated with the so-called “currying”. For the sake of simplicity, consider currying as a special case of a partial application that means a process of breaking a function of N arguments into a chain of N functions of a single argument.

Lazy evaluation is a way of computing, in which values are not calculated until they are required. This neat feature can severely speed up applications, reduce memory footprint, and allows to process infinite data. Lazy evaluation is attainable in multiple ways. It can be either an evaluation strategy supported by the language itself (i.e. Haskell), or it can be implemented via special data structures, which is especially easy with first-class functions available in any functional language.

Clojure, F# and C# used for examples in this article aren’t lazy languages by themselves, they employ an eager evaluation strategy, nevertheless they heavily utilize lazy evaluation and support it through their data structures.

; Clojure (def array (take 10 (repeatedly #(rand-int 1000)))) (def ordered-array (delay (sort array))); sort array on demand (force ordered-array); force evaluation

// F# let rn = new Random() // Generate array let arr = [| for _ in 1 .. 1000 -> rn.Next() |] // orderedArray will be sorted on demand let orderedArray = lazy (arr |> Array.sort) orderedArray.Force() // force evaluation

// C#

var rn = new Random();

var sequence = Enumerable

.Range(0, 1000)

.Select(i => rn.Next());

var orderedSequence = sequence

.OrderBy(a => a);

/* Most of the LINQ functions share lazy nature utilizing iterator pattern under the hood. Running this code will only define 'orderedSequence' but not evaluate it until someone will force it with ToArray or foreach statement */Using closures, it is quite easy to implement lazy data structures and infinite sequences by yourself:

; Clojure

(defn new-seq [next-value value]

; return function of action argument

(fn [action]

(cond ; something like a switch but better

(= action :next) ; if action is :next

; create a new generator with the next value

(new-seq next-value (next-value value))

(= action :value) ; if action is :value

value))) ; return current value

; sequence of powers of 2

(def powers-of-2 (new-seq (fn [x] (* 2 x)) 2))

(powers-of-2 :value) ; 2

(((powers-of-2 :next) :next) :value) ; 8

; doesn’t it look like enumerator.MoveNext().MoveNext().Current in C#?// F#

type Seq<'T> = {

value: 'T;

next: (unit -> Seq<'T>) }

let rec newSeq next value : Seq<'T> = {

// return current value

value = value;

// create a new sequence with the next value

next = (fun () -> newSeq next (next value))

}

// sequence of powers of 2

let pow2Seq = newSeq (fun x -> x * 2) 2

pow2Seq.value // 2

pow2Seq.next().next().value // 8

// doesn’t it look like enumerator.MoveNext().MoveNext().Current in C#?Even though the final result looks like enumerator in C#, the approach is qualitatively different, since we do not change a state of an object. Our data remains immutable and functions pure.

As it was already mentioned, changing the state of variables is not encouraged if simply not possible when using functional languages. Therefore, looping isn’t a suitable way of handling sequences of data, since loops rely on the changing of the counter state. An alternative is to utilize recursive function calls. In the functional world, recursion is considered as an idiomatic way to handle repeatable operations. Interestingly, the original article by John McCarthy describing LISP was called “Recursive Functions of Symbolic Expressions and Their Computation by Machine”.

In fact, both recursion and looping are functionally identical, and every recursive algorithm can be replaced with a loop and vice versa, while recursion is subjectively more expressive. There is a remarkable citation by L. Peter Deutsch regarding this matter: “To iterate is human, to recurse, divine.” While being a simple and elegant solution for certain problems, recursion has several technical issues to be addressed: firstly, possible overflow of the call stack and secondly, unnecessary memory consumption. Stack overflow appears to be a dread for most of the languages (including virtual machines like JVM & CLR), since they utilize the call stack of fixed size. On the other hand, some languages have no such limitation, notably Racket can dynamically extend the call stack, until it reaches a memory limit of the virtual machine. Nevertheless, there are other approaches to overcome unnecessary call stack consumption.

Often recursive functions end with the call to itself, such functions are called “tail-recursive” and can be easily optimized by a compiler to a simple loop.

Let’s examine different types of recursive functions in contrast, starting with directly recursive functions without tail calls:

; Clojure no tail recursion

(defn factorial-without-tail [x]

(if (< x 2)

1

(* x (factorial-without-tail (- x 1)))))

; (factorial-without-tail 100000)

; Exception java.lang.StackOverflowError// Clojure binary decompiled into Java

public static final Var const__2 = RT.var(

"clojure.core", "bigint");

public static Object invokeStatic(Object x) {

Object object;

if (Numbers.lt(x, 2L)) {

object = ((IFn)const__2.getRawRoot()).invoke(1L);

} else {

Object object2 = x;

Object object3 = x;

x = null;

object = Numbers.multiply(

object2,

// recursive, stack-consuming call

invokeStatic(Numbers.minus(object3, 1L)));

}

return object;

}// F# no tail recursion

let rec factorial (x : int) : bigint =

if x < 2

then 1I

else (bigint x) * factorial (x - 1)

// factorial 100000

// Process is terminating due to StackOverflowException// F# binary decompiled into C#

public static BigInteger factorial (int x) {

if (x < 2)

return BigInteger.One;

// recursive, stack-consuming call

return new BigInteger(x) * factorial (x - 1);

}These functions can be rewritten in a specific manner, so that compiler would detect a trivial possibility to convert the recursive calls into a simple loop:

; Clojure tail recursion

(defn factorial [x]

(loop [x x, acc (bigint 1)]

(if (< x 2)

acc

(recur (- x 1) (* x acc)))))

; (factorial 100000)

; Gigantic number// Binary decompiled into Java

public static final Var const__0 = RT.var(

"clojure.core", "bigint");

public static Object invokeStatic(Object x) {

Object object = x;

x = null;

Object x2 = object;

Object acc = ((IFn)const__0.getRawRoot()).invoke(1L);

// There is a loop on a place of recursion now!

do {

if (Numbers.lt(x2, 2L)) break;

Number number = Numbers.minus(x2, 1L);

Object object2 = x2;

x2 = null;

Object object3 = acc;

acc = null;

acc = Numbers.multiply(object2, object3);

x2 = number;

} while (true);

Object object4 = acc;

acc = null;

return object4;

}// F# tail recursion

let factorial (x : int) : bigint =

let rec loop x acc : bigint =

if x < 2

then acc

else loop (x - 1) ((bigint x) * acc)

loop x 1I

// factorial 100000

// Gigantic number// Binary decompiled into C#

internal static BigInteger loop(int x, BigInteger acc) {

int num;

// There is a loop on a place of recursion now!

for (; x >= 2; x = num) {

num = x - 1;

acc = new BigInteger(x) * acc;

}

return acc;

}

public static BigInteger factorial(int x) {

return loop(x, BigInteger.One);

}Although some algorithms cannot be trivially converted into a simple loop, especially mutually recursive ones:

; Clojure mutual recursion

(declare r2) ; forward reference

(defn r1 [i call-stack]

(let [call-stack (cons (str "r1 " i) call-stack)]

(if (< i 1)

call-stack

(r2 (- i 1) call-stack))))

(defn r2 [i call-stack]

(let [call-stack (cons (str "r2 " i) call-stack)]

(if (< i 1)

call-stack

(r1 (- i 1) call-stack))))

; (r1 10000 nill)

; Exception java.lang.StackOverflowError// F# mutual recursion

let rec r1 (i : int) (callStack : list<string>)=

let call = String.Format("r1 {0}", i)

let callStack = call::callStack

if i < 1

then callStack

else r2 (i - 1) callStack

and r2 (i : int) (callStack : list<string>) =

let call = String.Format("r2 {0}", i)

let callStack = call::callStack

if i < 0

then callStack

else r1 (i - 1) callStack

// r1 10000

(* Surprisingly F# compiler is able to optimize mutual tail-recursive functions! *)In case when tail call optimization is not an option, there is a universal cure called “trampoline”. Trampoline is just a special kind of function that calls the function that was supplied to it: if the value returned by the supplied function is a function as well, then the trampoline repeats until the returned value is not a function. Trampoline, while being a last-ditch action against stack consumption, requires target recursive functions to be rewritten in a specific manner:

; Clojure

; Trampoline source code:

(source trampoline)

(defn trampoline

"trampoline can be used to convert algorithms requiring mutual

recursion without stack consumption. Calls f with supplied args, if

any. If f returns a fn, calls that fn with no arguments, and

continues to repeat, until the return value is not a fn, then

returns that non-fn value. Note that if you want to return a fn as a

final value, you must wrap it in some data structure and unpack it

after trampoline returns."

{:added "1.0"

:static true}

([f]

(let [ret (f)]

(if (fn? ret)

(recur ret)

ret)))

([f & args]

(trampoline #(apply f args)))); Clojure

(declare r2)

(defn r1 [i call-stack]

(let [call-stack (cons (str "r1 " i) call-stack)]

(if (< i 1)

call-stack

; return a closure instead of recursive call

#(r2 (- i 1) call-stack))))

(defn r2 [i call-stack]

(let [call-stack (cons (str "r2 " i) call-stack)]

(if (< i 1)

call-stack

; return a closure instead of recursive call

#(r1 (- i 1) call-stack))))

(trampoline r1 10000 nil)

; OK! Prints the history of recursive callsIn conclusion about recursion, it is essential to point out that it is absolutely unnecessary to write recursive functions every time you need to simply transform a data sequence or perform iterative action. In every functional language these operations are generalized by functions like map, reduce, or similar ones:

; Clojure ; some numbers: (def data [1 2 3 4 5]) ; their sum: (def sum (reduce + data)) ; their percents of a sum: (def percents (map #(/ % sum) data)) ; print each percent into output (doseq [x percents] (println x))

// F#

(* this is just example, consider using rational numbers in real projects instead *)

// some numbers:

let data = [|1m;2m;3m;4m;5m|]

// their sum:

let sum = data |> Seq.reduce(

fun x y -> x + y)

// their percents of a sum:

let percents = data |> Seq.map(

fun x -> x / sum)

// print each percent into output

percents |> Seq.iter Console.WriteLine// C#

/* this is just example, consider using rational numbers in real projects instead */

var data = new decimal[] { 1, 2, 3, 4, 5 };

/* Indeed, LINQ borrowed functional 'reduce' in form of Aggregate */

var sum = data.Aggregate((x, y) => x + y);

/* and functional 'map' in form of Select */

var percents = data.Select(x => x / sum);

/* here we still have to iterate */

foreach (var p in percents)

Console.WriteLine(p);So far, we have briefly examined the four pillars of the functional paradigm:

Interestingly, most of the object-oriented features have respective alternatives in functional programming:

| Goal | Imperative/OOP | FP |

| Binding data & behavior | Class -> Object | Closures |

| Decoupling from concrete the implementation | Interface | Function signature |

| Encapsulation | Access modifiers | Immutability, Closures |

It is remarkable, considering the fact that the functional paradigm is notably older than the object-oriented one. From my perspective, it seems like the functional paradigm superseded its time with its features, while imperative languages had to evolve rediscovering them when facing the challenges of growing complexity.

Regarding this matter, the opinion of object-oriented pioneer Alan Key is quite thought-provoking: “OOP to me means only messaging, local retention and protection and hiding of state-process, and extreme late-binding of all things. It can be done in Smalltalk and in LISP”. His view appears different to a common perspective about what object-orientation truly means.

For me personally, OOP is remarkable for reasons rather philosophical than technical, while technically it is rather close if not inferior to functional programming. An object-oriented perspective is crucially important in modeling the system in the way of reducing the mismatch between the mental and software models of the system, while following functional practices may significantly simplify technical issues of reliability, scalability, and complexity.

This is not about superiority of one paradigm over another. You should treat languages like tools, more or less, suitable to perform a specific task.

However, taking into account the power of modern hardware and the expressive power of the functional paradigm, you should consider its application in practice.

While using functional languages in concrete commercial software may be a matter of discussion, it is undoubtedly should be a default choice of learning programming for beginners.

According to Dijkstra: “A fundamental reason for the preference is that functional programs are much more readily appreciated as mathematical objects than imperative ones, so that you can teach what rigorous reasoning about programs amounts to.”

Finally, functional programming, being declarative, provokes wishful thinking, which is a superior quality of having the own vision of what should be rather than adjusting yourself to existing circumstances.

If you have found this material interesting, consider the following resources:

http://www.paulgraham.com/articles.html(link is external)

https://clojure.org/(link is external)

Nikolay is an advanced .NET developer working with Sigma Software for many years. He is passionate about technology and its progress, so Nikolay actively participates in tech community events, acts as a mentor and trainer, competes and takes high places at international Hackathons.

Most AI assistant deployments start with a question: what can we give this system access to? The more data, the better the answers. That logic is correct – and ...

Biotech executives face a massive data problem. The issue is not gathering information. The issue is making that information useful. Clinical trials slow down b...

Healthcare leaders need to switch from billing for services to getting paid for patient health. Artificial intelligence offers specific tools to manage this shi...

Would you like to view the site in German?

Switch to German