Why Keep Historical Value-based Care Data?

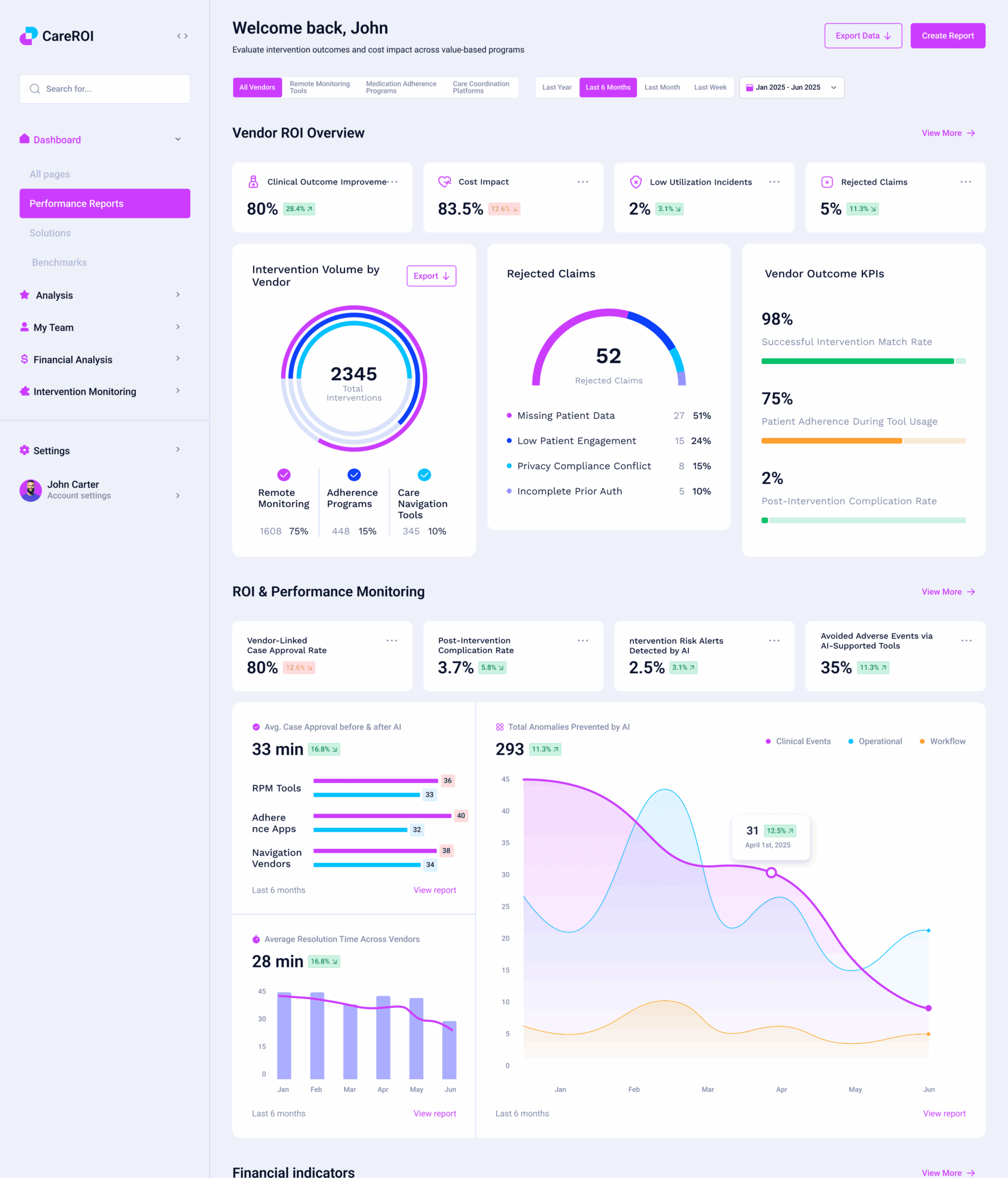

What a Healthcare Data Analytics Platform Must Deliver for Value-based Care

Healthcare Data Analytics, ML, and KPI Operationalization

Governance, Privacy & Compliance for Healthcare Data Analytics Platforms

Problems that a Custom Healthcare Analytics Platform Can Solve

Value-based Care Data Analytics Implementation Roadmap

Procurement Checklist for CTOs: Custom Healthcare Data Analytics Solutions vs. Managed Services

Choosing the Right Healthcare Data Analytics Platform Partner