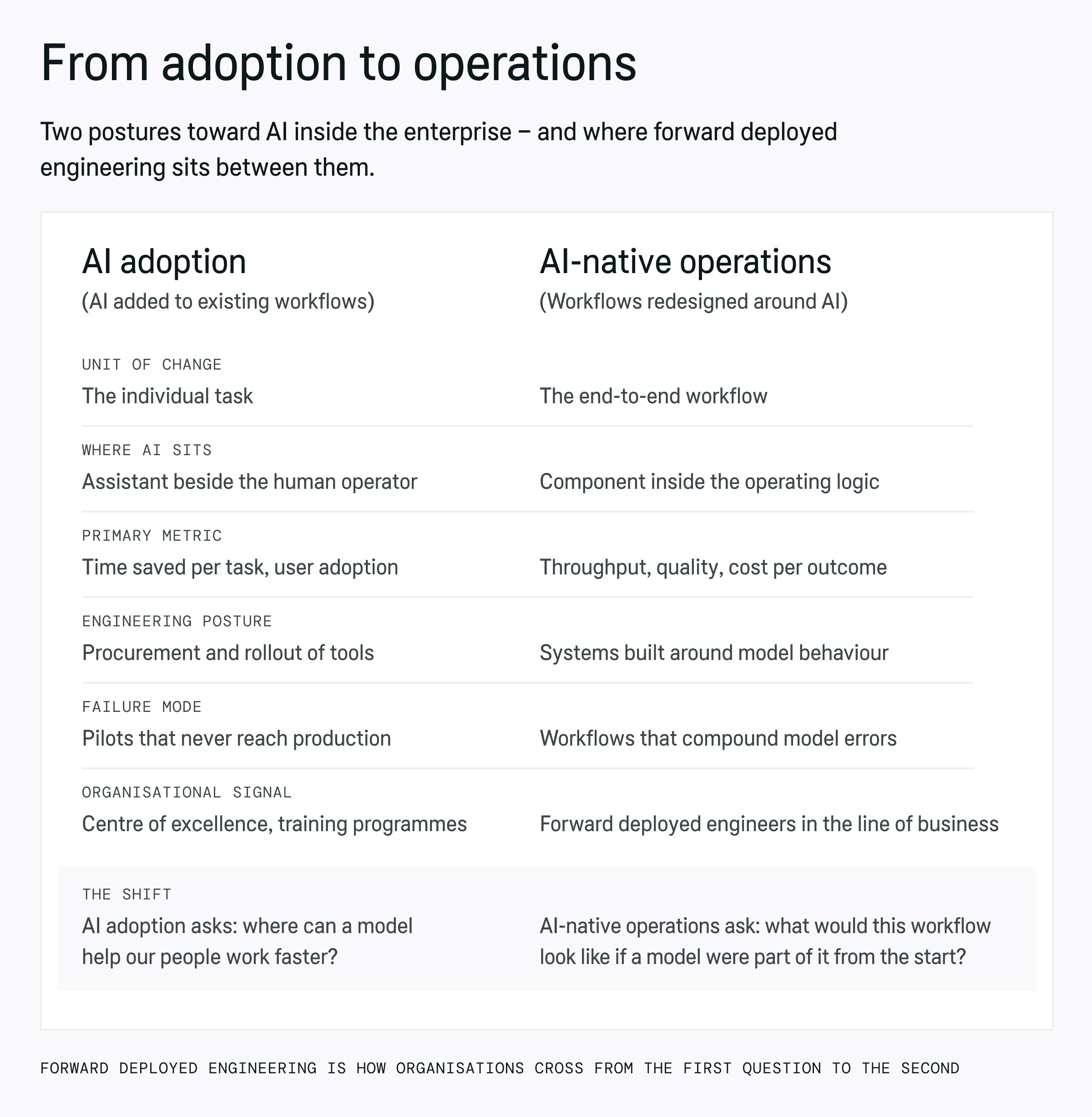

AI adoption vs. AI-native operations

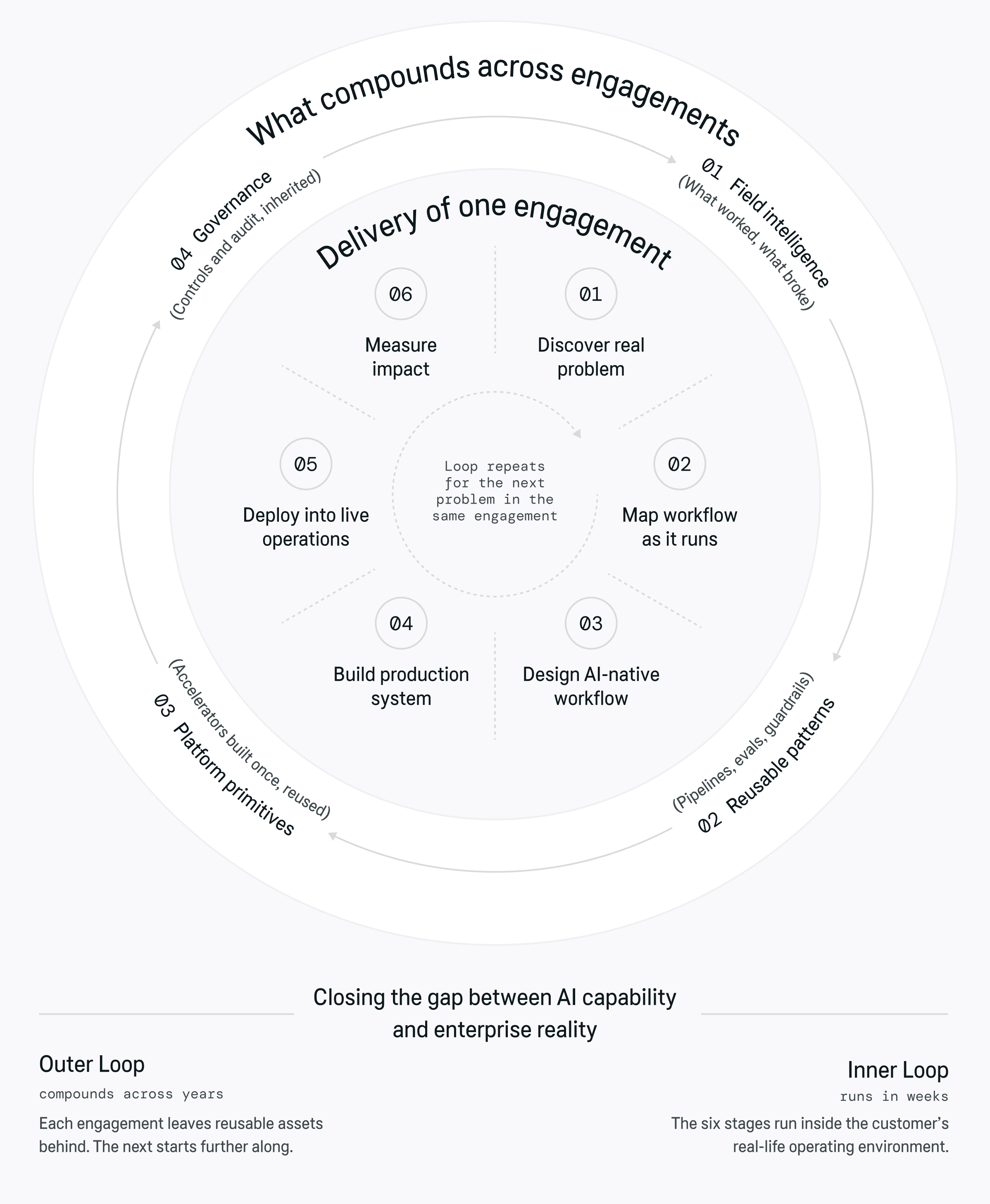

What Forward Deployed Engineering is

How Forward Deployed Engineering changes AI delivery

Forward Deployed Engineering vs. consulting, business analysis, and solutions engineering

How the domain knowledge gap is addressed

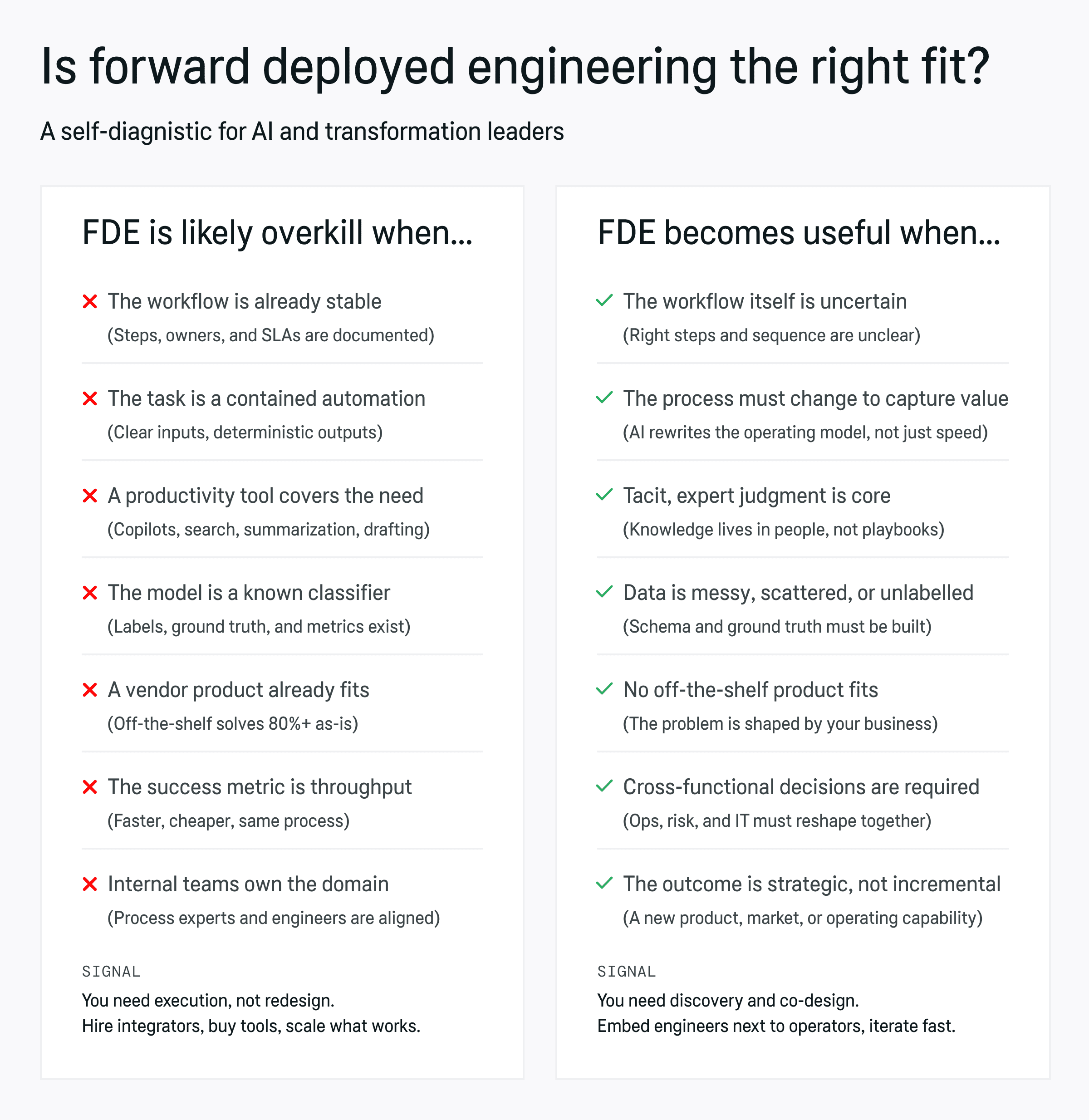

Where Forward Deployed Engineering is useful, and where it is overkill