Why “AI-generated tests” usually don’t solve the legacy problem

Many mid-size and large organizations depend on at least one legacy system no one wants to touch: often 10–20 years old, with outdated documentation and the engineers who built it long gone. Any change feels like a gamble. This fear, not technology, is what truly blocks modernization.

Anatoliy Kochetov, Chief Operating Officer, Sigma Software

Maxim Kovtun, Chief Innovation Officer, Sigma Software

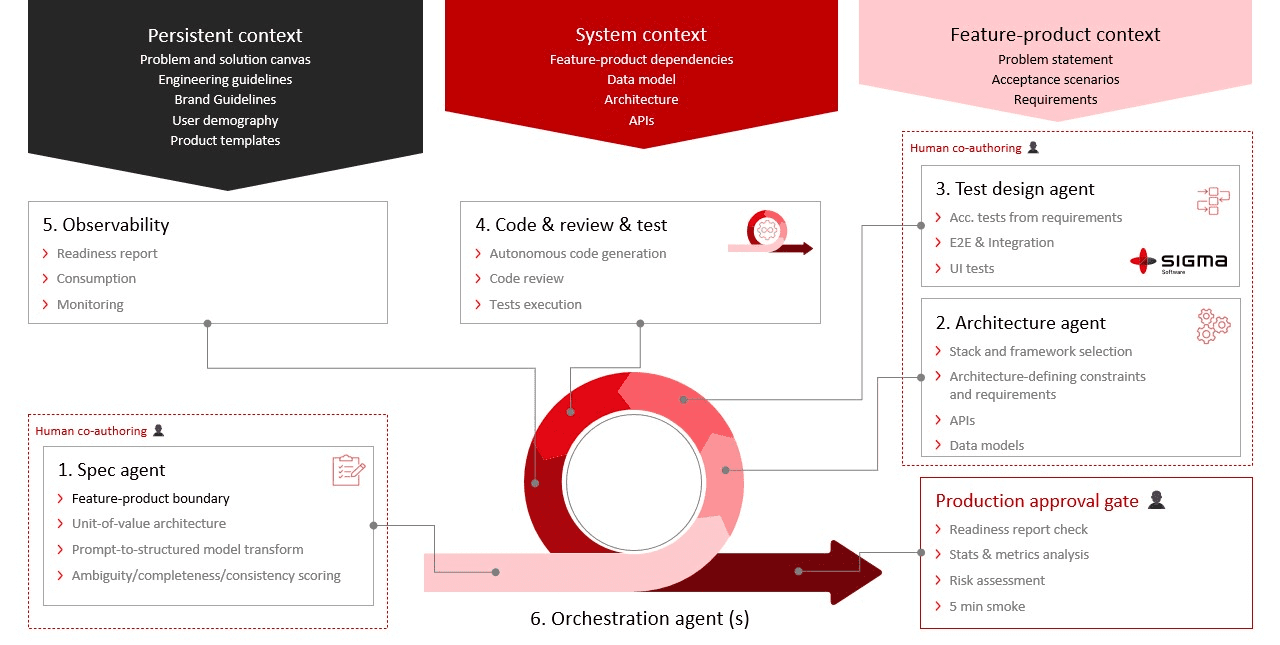

Sigma Software and Datuum developed a joint technology blueprint: a practical, AI-native approach to behavior reconstruction and test coverage that fits how legacy modernization actually happens — incrementally, with uncertainty, and without the luxury of starting from scratch.

Over time, legacy systems become black boxes. Technologies get deprecated, security risks grow, and experts capable of maintaining these stacks are increasingly rare. Avoiding change is not an option — but neither is betting on an expensive, one-size-fits-all platform that wasn’t built for your system’s specific quirks and history.

A wave of tools promises the same shortcut: feed legacy code into AI, generate tests, and modernize safely. It sounds good. Until you try it.

Legacy code is rarely testable by design. Tight coupling, implicit dependencies, and hidden side effects make unit tests fragile or meaningless. AI-generated tests commonly validate the wrong things, locking in implementation details instead of real behavior. When a system already behaves incorrectly, AI simply codifies that behavior. Technical debt becomes tested technical debt.
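The difference between locking in implementation details and locking in behavior can be shown with a minimal Python sketch. Everything in it is hypothetical and invented for illustration: the `InvoiceService` class, its `_apply_discount` helper, the 10% loyalty rule, and the refactored variant. It is not taken from any real system.

```python
from unittest import mock


class InvoiceService:
    """Hypothetical legacy class; the 10% loyalty discount is an assumption."""

    def total(self, amount_cents: int, loyal: bool) -> int:
        return self._apply_discount(amount_cents) if loyal else amount_cents

    def _apply_discount(self, amount_cents: int) -> int:
        return amount_cents * 90 // 100


class RefactoredInvoiceService:
    """Same observable behavior, but the private helper has been inlined."""

    def total(self, amount_cents: int, loyal: bool) -> int:
        return amount_cents * 90 // 100 if loyal else amount_cents


def implementation_coupled_test(service) -> None:
    """Asserts HOW the result is produced: it depends on a private helper."""
    with mock.patch.object(service, "_apply_discount", return_value=900) as helper:
        service.total(1000, loyal=True)
    helper.assert_called_once_with(1000)


def behavior_test(service) -> None:
    """Asserts WHAT a caller observes, regardless of internal structure."""
    assert service.total(1000, loyal=True) == 900
    assert service.total(1000, loyal=False) == 1000
```

Under these assumptions, `behavior_test` passes for both classes, while `implementation_coupled_test` raises `AttributeError` on the refactored class even though nothing a caller can observe has changed.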

The problem is not test quality. The problem is the starting point.

Modernizing a legacy system doesn’t start with tests. It starts with understanding how the system actually behaves.

AI helps here, but not by generating tests from code. Instead, it reconstructs behavior from multiple signals: static artifacts (specs, Confluence pages, test cases, code, configs), historical artifacts (tickets, change history), and runtime signals (logs, metrics, production traffic). These are correlated into a behavioral model that engineers review and validate before any modernization begins.
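One way to picture such a behavioral model is as a set of behaviors, each carrying the evidence that supports it and a flag that only a human reviewer can set. The sketch below is an assumption about a possible data shape, not a description of the authors' tooling; the `Signal` and `BehaviorEntry` types, the behavior keys, and the `build_model` function are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Signal:
    source: str    # "static", "historical", or "runtime"
    behavior: str  # normalized behavior key (hypothetical format)
    detail: str    # where the evidence came from


@dataclass
class BehaviorEntry:
    behavior: str
    evidence: list = field(default_factory=list)
    validated: bool = False  # set to True only after engineer review

    @property
    def sources(self) -> set:
        """Distinct kinds of signal that support this behavior."""
        return {signal.source for signal in self.evidence}


def build_model(signals) -> dict:
    """Group signals by the behavior they describe."""
    grouped = defaultdict(list)
    for signal in signals:
        grouped[signal.behavior].append(signal)
    return {key: BehaviorEntry(key, items) for key, items in grouped.items()}


signals = [
    Signal("static", "POST /orders returns 201", "spec page"),
    Signal("runtime", "POST /orders returns 201", "access log sample"),
    Signal("historical", "orders over limit are rejected", "old ticket"),
]
model = build_model(signals)
```

Here the first behavior is backed by two kinds of signal and the second by only one, which is the sort of difference a reviewer would want to see before trusting either entry.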

Only after behavior is reconstructed do we generate end-to-end tests that lock in observable outcomes rather than internal implementation. Tests protect what users, integrations, and downstream systems experience, not the hidden plumbing. It’s the difference between documenting every pipe in a building versus documenting what happens when you turn the tap.
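A test that locks in observable outcomes can be sketched as a replay of recorded interactions against a candidate system. This is a minimal illustration under stated assumptions: the record format, the `timestamp` and `request_id` fields treated as volatile, and the two stand-in system functions are hypothetical.

```python
VOLATILE_FIELDS = {"timestamp", "request_id"}  # assumed to differ between runs


def normalize(response: dict) -> dict:
    """Keep only the fields a caller can meaningfully depend on."""
    return {k: v for k, v in response.items() if k not in VOLATILE_FIELDS}


def replay(recorded: list, system) -> list:
    """Return the names of recorded cases whose observable outcome changed."""
    mismatches = []
    for case in recorded:
        actual = normalize(system(case["request"]))
        if actual != normalize(case["response"]):
            mismatches.append(case["name"])
    return mismatches


def legacy_system(request: dict) -> dict:
    """Stand-in for the legacy endpoint (hypothetical)."""
    total = request["qty"] * request["unit_price"]
    return {"status": 201, "order_total": total, "timestamp": "2010-01-01T00:00:00Z"}


def modernized_system(request: dict) -> dict:
    """Stand-in for the rewritten endpoint: different internals, same outcome."""
    total = sum(request["unit_price"] for _ in range(request["qty"]))
    return {"status": 201, "order_total": total, "timestamp": "2025-01-01T00:00:00Z"}


request = {"qty": 2, "unit_price": 500}
recorded = [{"name": "two widgets", "request": request, "response": legacy_system(request)}]
```

Calling `replay(recorded, modernized_system)` returns an empty list because the outcome seen by the caller is unchanged, even though the internals and the timestamp differ.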

At Sigma Software, we apply this in production today. Our end-to-end test agent automates behavior reconstruction and test generation as part of AI-native, multi-agent development workflows, with confidence scoring and human-in-the-loop review built in before modernization begins.
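The article does not describe how the confidence scoring is computed. Purely as an illustration, the sketch below assumes a simple rule: a behavior's confidence is the fraction of signal kinds (static, historical, runtime) that support it, and low-confidence behaviors go to reviewers first. The scoring rule, the `0.6` threshold, and the function names are assumptions, not the agent's actual logic.

```python
SOURCE_KINDS = ("static", "historical", "runtime")


def confidence(sources) -> float:
    """Fraction of known signal kinds that support a behavior (assumed rule)."""
    return len(set(sources) & set(SOURCE_KINDS)) / len(SOURCE_KINDS)


def triage(candidates: dict, threshold: float = 0.6):
    """Split behaviors into (review_first, review_later), lowest confidence first.

    Nothing is auto-approved: both lists still go to a human reviewer.
    """
    ranked = sorted(candidates.items(), key=lambda item: confidence(item[1]))
    review_first = [name for name, src in ranked if confidence(src) < threshold]
    review_later = [name for name, src in ranked if confidence(src) >= threshold]
    return review_first, review_later


candidates = {
    "refund after 30 days is rejected": {"static"},
    "POST /orders returns 201": {"static", "runtime"},
    "nightly export writes CSV": {"static", "historical", "runtime"},
}
review_first, review_later = triage(candidates)
```

With these inputs, the behavior supported by a single kind of signal lands in `review_first`, and the other two land in `review_later`.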

Until recently, reconstructing system behavior required extensive manual analysis and tribal knowledge that often no longer existed. AI has changed that. Modern tools can reconstruct behavior from incomplete artifacts, correlate runtime signals with historical changes, and surface uncertainty with measurable confidence.

Organizations can now modernize decades-old platforms safely — without downtime, blind rewrites, or dependence on code no one fully understands.

This is part of why the partnership between Sigma Software and Datuum has become one of Sigma Software’s most sought-after AI offerings: behavior-first, AI-generated test coverage delivered as a fixed-scope engagement, a dedicated team of AI-enabled engineers, or a full transformation of customer teams toward AI-native SDLC practices.

Learn more at sigmasoftware.ai

Sigma Software Group provides IT services to enterprises, software product houses, and startups. Working since 2002, we have built deep domain knowledge in AdTech, automotive, aviation, gaming, telecom, e-learning, FinTech, and PropTech. We constantly work to enrich our expertise with machine learning, cybersecurity, AR/VR, IoT, and other technologies. Here we share insights into tech news, software engineering tips, business methods, and company life.
